Entity Framework is a built-in ORM in .NET. It’s great to work with. It handles the complete data access for you. (Connection, query generating, executing of SQL commands etc.)

The performance is OK, if you don’t work with lot of data. The current release (.NET 4.0) doesn’t support batch processing.

That means: If you add 1000 of new Entites the Framework will generate 1000 Insert Statements. This slows down your application!

Fortunately, there is a way to implement your own code before the 1000 queries will be generated.

How it works:

| First you have to register the SavingChanges event from the ObjectContext. |

| Inside the registered method you have to get all new added entites from the ObjectStateManager. |

| Generate a bach Insert Statement. |

| Send the SQL statement to the database. |

Here is the code:

class Program

{

static void Main(string[] args)

{

// Create object of database context

TestDBEntities dbContext = new TestDBEntities();

// Register the event

dbContext.SavingChanges += new EventHandler(dbContext_SavingChanges);

// Generate 500 new entites and add them to the context.

for (int nUp = 0; nUp < 500; nUp++)

dbContext.AddTotbl_person(new tbl_person()

{

id = nUp,

nachname = "micic " + nUp.ToString(),

vorname = "darko" + nUp.ToString()

});

Stopwatch watch = new Stopwatch();

watch.Start();

// Save the changes.

dbContext.SaveChanges();

watch.Stop();

Console.WriteLine("Elapsed ms: " + watch.ElapsedMilliseconds.ToString());

}

static void dbContext_SavingChanges(object sender, EventArgs e)

{

TestDBEntities db = (TestDBEntities)sender;

// Get new entities

IEnumerator<ObjectStateEntry> stateEntries = db.ObjectStateManager.GetObjectStateEntries(System.Data.EntityState.Added).GetEnumerator();

StringBuilder sql = new StringBuilder();

string propVal = string.Empty;

while (stateEntries.MoveNext())

{

EntityObject newObj = (EntityObject)stateEntries.Current.Entity;

Type objType = newObj.GetType();

// Generate sql query (fields)

if (sql.Length == 0)

{

sql.Append("INSERT INTO tbl_person(");

foreach (PropertyInfo prop in objType.GetProperties())

{

if (prop.Name.ToLower() == "entitykey" || prop.Name.ToLower() == "entitystate")

continue;

sql.Append(prop.Name + ", ");

}

sql.Remove(sql.Length - 2, 2).Append(") ");

}

// Generate sql values

sql.Append("SELECT ");

foreach (PropertyInfo prop in objType.GetProperties())

{

if (prop.Name.ToLower() == "entitykey" || prop.Name.ToLower() == "entitystate")

continue;

if (prop.PropertyType == typeof(string))

propVal = "'" + prop.GetValue(newObj, null).ToString() + "'";

else

propVal = prop.GetValue(newObj, null).ToString();

sql.Append(propVal + ", ");

}

sql.Remove(sql.Length - 2, 2).Append(" UNION ALL ");

}

sql.Remove(sql.Length - 11, 11);

// open a connection and send the sql statement.

using(DbConnection dbCon = new SqlConnection("Data Source=...; Initial Catalog=TestDB; Integrated Security=SSPI;"))

{

using(DbCommand dbCmd = dbCon.CreateCommand())

{

dbCmd.CommandText = sql.ToString();

dbCon.Open();

dbCmd.ExecuteNonQuery();

stateEntries.Reset();

while (stateEntries.MoveNext())

stateEntries.Current.AcceptChanges();

}

}

}

}

The code shows only how it works for this specific case! If you want to build a generic "query generator" you have to take some points into account:

| The database table name can differ from the entity name. (With the current API you can't find out the table name of the entity.) Maybe you can parse the EDMX file. |

| A entity doesn't have to map a table. (You can spilt a table into multiple entities) |

| Auto generate key. (With the current API you can find out which fields build a primary key and if the are auto generated. |

| Relationship handling. (Parent-Child..) |

| DB Provider (MSSQL, Oracle, MySQL usw.) |

| Sending batch queries in packages |

| Some other things I forgot 🙂 |

I tested my code above and came to the following result.

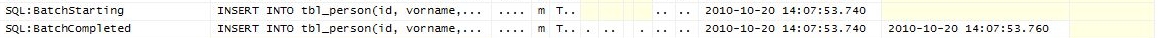

Adding 500 entites with the batch extension:

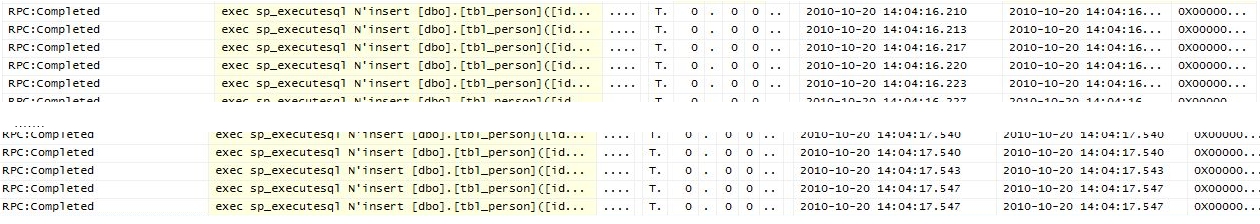

Adding 500 entites without the batch extension (just EF):

I used the SQL Profiler for tracing the traffic. As you see, the batch extension send just one (batch) query.

INSERT INTO tbl_person(id, vorname, nachname) SELECT 0, 'darko0', 'micic0' UNION ALL SELECT 1, 'darko1', 'micic1' UNION ALL SELECT 2, 'darko2', 'micic2' UNION ALL ...

And the Entity Framework sends FOR EACH new entity a query.

INSERT INTO tbl_person(id, vorname, nachname) VALUES(0, 'darko0', 'micic0') INSERT INTO tbl_person(id, vorname, nachname) VALUES(1, 'darko1', 'micic1') INSERT INTO tbl_person(id, vorname, nachname) VALUES(0, 'darko2', 'micic2')

How about the performance:

| Batch processing extension | 110ms |

| Entity Framework | 1200ms |

You see... My extension is much faster.

I recommend to write extension only in specific cases, where you have to insert a lot of data at once.

You have a lot do to if you want to build a generic batch extension. And I'm not sure if you reach the required information with the current EF.NET API for this.

And the next thing I'll improve is my english 🙂